INTRODUCTION

Progress testing has been recognized as an important feedback tool for students, faculty, program directors, and schools.1 Progress test results help identify critical gaps in curriculum development and point to necessary corrections.2 Several studies using detailed feedback and online performance-monitoring tools have demonstrated the benefits of progress testing for student learning3-6 and for educational program management.7-9

By contrast, few studies have examined whether progress test item writers receive feedback, or how such feedback is generated. Previous literature has focused on item development procedures and item construction guidelines,10-12 and several studies have demonstrated the efficacy of faculty development programs in producing better items and more reliable exams.13-15 Furthermore, although many studies have suggested the need for faculty development programs with longitudinal monitoring of evaluative processes,16-18 few papers have addressed feedback for item writers.19,20 This gap matters because flawed items have been clearly shown to compromise the validity and reliability of a test.21

In Brazil, progress testing is used mainly for formative purposes. Several school consortia use it to give students feedback consisting of the exam booklet with the answer key, commentaries on the items, and related references. This gives students an opportunity for self-assessment and, subsequently, guides their study. However, because the items are released to students, this strategy makes it difficult to build a large item bank from which previously used items could be reused. Faculty members are therefore often asked to write new items. What happens to these items? The purpose of this report is to present a set of initiatives at one school that helped improve item writers’ skills by giving them feedback on the fate of the items they wrote.

EXPERIENCE REPORT

Progress Testing Methods

Since 2005, a consortium of public medical schools, mainly from the state of São Paulo, has administered a progress test to all students of its member schools. This consortium is called NIEPAEM - Núcleo Interinstitucional de Estudos e Práticas de Avaliação em Educação Médica (Interinstitutional Group for Studies and Assessment Practices in Medical Education). It now includes the following schools: UNICAMP, UNESP, USP (Ribeirão Preto and Bauru campi), UNIFESP, UFSCAR, FAMEMA, FAMERP, UEL, and FURB.22

The consortium uses a standardized blueprint targeting the knowledge expected of newly qualified physicians. The exam has 120 multiple-choice questions, divided equally among six subject areas (20 per area): basic sciences, internal medicine, pediatrics, surgery, obstetrics and gynecology, and public health. Every year, NIEPAEM issues a set of item orders that cover the blueprint. Each school is represented at the NIEPAEM meetings by a faculty member, who is responsible for exchanging information between their school and the others and for passing the item orders on to faculty colleagues, who then write the required items. Because every member school receives the same item orders, a single order may yield up to ten written items. Afterwards, specialists from the consortium schools meet to select the items for the final exam, and this panel also edits the items to improve their quality.

To write an item, faculty members complete a standard form. The form requires item writers to state the item order, offer four answer choices (A, B, C, and D) with only one correct answer, and place the correct answer in option “A”. All items should include appropriate commentaries and related bibliographic references. Negatively worded stems (e.g., “tick the wrong alternative”) and “all of the above are correct” options are discouraged. Many of these practices are based on earlier Canadian and Dutch experience.23,24

Item Writing Flaws

In 2017, one of the NIEPAEM schools became concerned about the quality of the items its faculty delivered. Although item writers had always received guidelines for writing good items, several faculty members still submitted flawed items. Many items went unused, wasting the time and effort of item writers and specialist reviewers. The first task was therefore to quantify the problem among the delivered items.

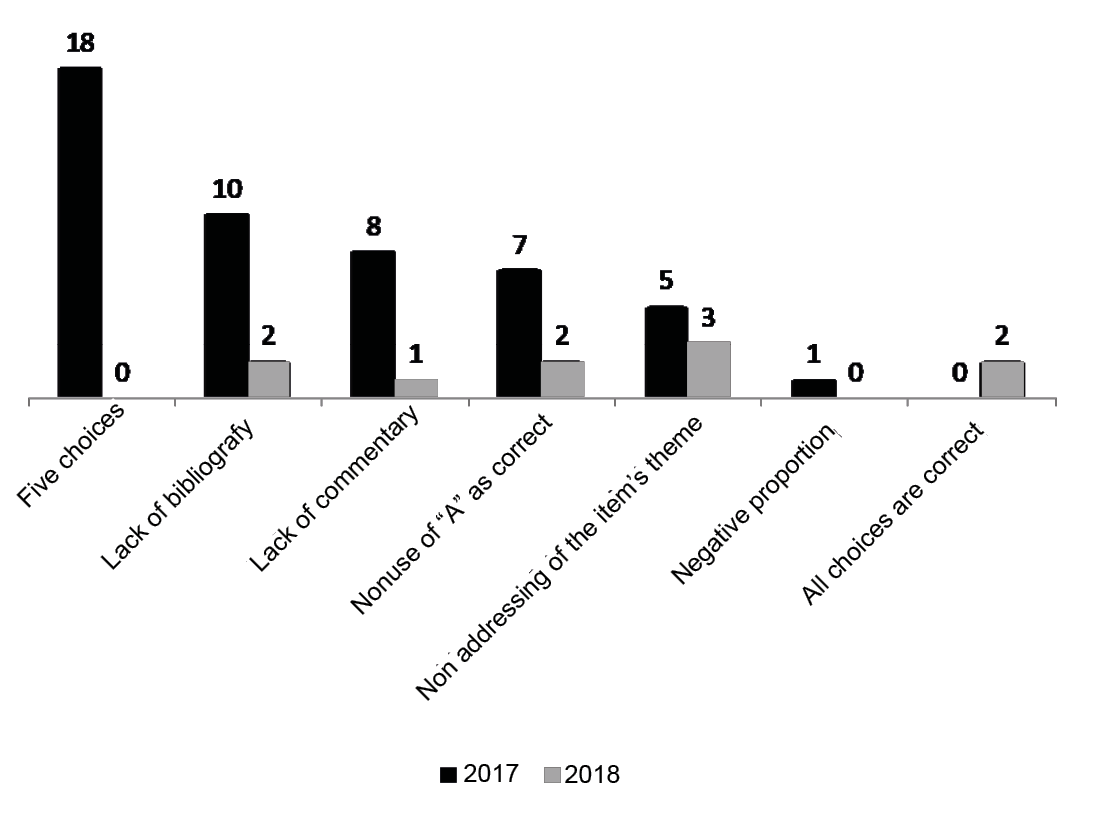

That year, 158 item orders were sent to the departmental representatives in the school, and 126 items were completed. Of these, 87 (69%) had no problems and were forwarded to the panel of specialists. The remaining 39 items had at least one problem each, 49 problems in all: five answer choices instead of four (18), missing bibliographic reference (10), missing commentary (8), correct answer not placed in option “A” (7), failure to address the theme of the item order (5), and negative proposition (1). Of the 87 items taken to the item selection meeting, 28 were selected for the final exam, accounting for 23.3% of its 120 items.
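The 2017 figures form a simple funnel: ordered, delivered, problem-free, and finally selected. The minimal sketch below recomputes the percentages quoted above from the raw counts, so they can be checked or rerun for another year. The constant and function names are hypothetical, chosen for illustration only.

```python
# Item counts for 2017, as reported in the text.
ORDERED_2017 = 158
DELIVERED_2017 = 126
FLAWLESS_2017 = 87
SELECTED_2017 = 28
EXAM_LENGTH = 120  # items in the final progress test

# Problem counts by type; a single item may have more than one problem.
PROBLEMS_2017 = {
    "five answer choices": 18,
    "missing bibliographic reference": 10,
    "missing commentary": 8,
    "correct answer not in option A": 7,
    "item order theme not addressed": 5,
    "negative proposition": 1,
}


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


def funnel_summary() -> dict[str, float]:
    """Derived rates for each stage of the 2017 item funnel."""
    flawed = DELIVERED_2017 - FLAWLESS_2017
    return {
        "delivery rate (%)": pct(DELIVERED_2017, ORDERED_2017),
        "flawless among delivered (%)": pct(FLAWLESS_2017, DELIVERED_2017),
        "flawed items": flawed,
        "problems per flawed item": round(sum(PROBLEMS_2017.values()) / flawed, 2),
        "selected among reviewed (%)": pct(SELECTED_2017, FLAWLESS_2017),
        "share of final exam (%)": pct(SELECTED_2017, EXAM_LENGTH),
    }


if __name__ == "__main__":
    for name, value in funnel_summary().items():
        print(f"{name}: {value}")
```

Keeping the problem counts per type, rather than only the number of flawed items, makes it visible that the 39 flawed items carried 49 problems between them.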

The Fate of the Items

After the exam had been administered and the results were available, the school’s local progress testing committee submitted a report to the school community. This report included an analysis of the test results, focusing on students’ performance.

The report also included individualized feedback for the writers whose items had been used in the exam. This feedback covered the quality of the writing, the changes made by the panel of specialists, students’ overall performance on the item, the psychometric indices of difficulty and discrimination, the performance of 6th-year students, and a comparison between students from the writer’s own school and those from the other schools. A brief explanation of the psychometric indices was also added to the report. Many faculty members expressed great satisfaction with this feedback, especially because it was the first time they had received such information.
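The report’s own explanation of the psychometric indices is not reproduced here. As a hedged illustration, the sketch below computes two common classical test theory indices: the difficulty index (proportion of examinees answering correctly) and the upper-lower discrimination index, using the conventional top and bottom 27% groups ranked by total score. Whether the committee used exactly these definitions is an assumption; the function names and the ten-examinee data are hypothetical.

```python
from typing import Sequence


def difficulty_index(item_correct: Sequence[int]) -> float:
    """Proportion of examinees answering the item correctly (scores are 0/1)."""
    return sum(item_correct) / len(item_correct)


def discrimination_index(
    item_correct: Sequence[int],
    total_scores: Sequence[float],
    fraction: float = 0.27,
) -> float:
    """Upper-lower discrimination index D = p_upper - p_lower.

    Examinees are ranked by total test score; the top and bottom
    `fraction` of examinees form the upper and lower groups.
    """
    if len(item_correct) != len(total_scores):
        raise ValueError("item and total score lists must have the same length")
    n_group = max(1, round(fraction * len(total_scores)))
    # Examinee indices sorted from lowest to highest total score.
    order = sorted(range(len(total_scores)), key=lambda i: total_scores[i])
    lower = order[:n_group]
    upper = order[-n_group:]
    p_upper = sum(item_correct[i] for i in upper) / n_group
    p_lower = sum(item_correct[i] for i in lower) / n_group
    return p_upper - p_lower


if __name__ == "__main__":
    # Hypothetical data for ten examinees: 1 = answered this item correctly.
    item = [1, 1, 1, 0, 1, 0, 1, 0, 0, 0]
    totals = [95, 88, 80, 75, 70, 62, 55, 50, 41, 30]
    print(f"difficulty p = {difficulty_index(item):.2f}")
    print(f"discrimination D = {discrimination_index(item, totals):.2f}")
```

An item that high scorers answer correctly and low scorers miss gets a D near 1; a negative D flags an item that low scorers get right more often, which usually points to a flaw worth reporting back to the writer.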

Finally, the report, which was delivered to all faculty members, described the flaws detected in item writing, including their distribution by subject area: 10 in obstetrics and gynecology, 8 in public health, 3 each in pediatrics and surgery, and none in internal medicine or basic sciences. This breakdown excluded five-choice errors and nonuse of “A” as the correct answer, since the local committee could easily correct these problems.

This analysis was a matter of concern for some of the school’s departments, mainly those identified as having item-writing problems, and meetings with the local committee were held to discuss the data.

The Following Year

In 2018, the same item-writing protocol was used. Each departmental representative received the item orders from the local progress testing committee and asked colleagues to write the items. Of the 161 items ordered, 117 were delivered. Of these, 107 (91%) had no problems and were taken to the panel of specialists for the item selection meeting. The remaining 10 items had one problem each: 3 were not sequentially ordered, 2 did not use “A” as the correct answer, 2 were missing bibliographic references, 2 used “all of the above are correct”, and 1 had no commentary. Figure 1 shows the distribution of flawed items over the two years.

In 2018, of the 107 items taken to the panel of specialists for analysis, 26 were used in the final exam (21.7% of its 120 items).
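As an illustrative check only, not an analysis reported in this study, the year-on-year drop in flawed items can be framed as a 2 × 2 table of flawed versus problem-free delivered items: 39 of 126 in 2017 and 10 of 117 (that is, 117 − 107) in 2018. The sketch below computes a Pearson chi-square statistic without continuity correction using only the standard library and compares it with the critical value 3.841 for one degree of freedom at α = 0.05. It assumes items are independent, which is a simplification, since items from the same writer or department are likely correlated; the names are hypothetical.

```python
# Delivered and flawed item counts per year, as reported in the text.
COUNTS = {
    2017: {"delivered": 126, "flawed": 39},
    2018: {"delivered": 117, "flawed": 10},
}

CHI2_CRITICAL_DF1_05 = 3.841  # chi-square critical value, df = 1, alpha = 0.05


def pearson_chi2_2x2(a: int, b: int, c: int, d: int) -> float:
    """Pearson chi-square (no continuity correction) for [[a, b], [c, d]]."""
    n = a + b + c + d
    rows = (a + b, c + d)
    cols = (a + c, b + d)
    observed = ((a, b), (c, d))
    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            # Expected count under independence of year and flaw status.
            expected = rows[i] * cols[j] / n
            chi2 += (observed[i][j] - expected) ** 2 / expected
    return chi2


def compare_years() -> tuple[float, float, float]:
    """Return (2017 flaw rate, 2018 flaw rate, chi-square statistic)."""
    y17, y18 = COUNTS[2017], COUNTS[2018]
    rate17 = y17["flawed"] / y17["delivered"]
    rate18 = y18["flawed"] / y18["delivered"]
    chi2 = pearson_chi2_2x2(
        y17["flawed"], y17["delivered"] - y17["flawed"],
        y18["flawed"], y18["delivered"] - y18["flawed"],
    )
    return rate17, rate18, chi2


if __name__ == "__main__":
    r17, r18, chi2 = compare_years()
    print(f"flawed 2017: {r17:.1%}, flawed 2018: {r18:.1%}")
    print(f"chi-square = {chi2:.2f} (critical value {CHI2_CRITICAL_DF1_05})")
```

A statistic above the critical value would indicate that the difference in flaw rates is unlikely under independence; with only two annual observations from one school, it says nothing about what caused the change.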

The distribution of flawed items among the six areas was: 4 in surgery, 2 in public health, 1 each in internal medicine and pediatrics, and none in basic sciences or obstetrics and gynecology. As in 2017, five-choice and nonuse of “A” problems were excluded, since the local committee could easily correct them.
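The two area breakdowns can be cross-checked against the problem counts by type: after removing the two error types the local committee corrected itself (five answer choices and correct answer not in option A), the remaining problems should equal the area totals. The minimal sketch below encodes the counts as reported; the category labels and function names are hypothetical shorthand.

```python
# Problems by type for each year, as reported in the text.
PROBLEMS = {
    2017: {"five answer choices": 18, "missing bibliography": 10,
           "missing commentary": 8, "correct answer not in option A": 7,
           "theme not addressed": 5, "negative proposition": 1},
    2018: {"not sequentially ordered": 3, "correct answer not in option A": 2,
           "missing bibliography": 2, "all of the above": 2,
           "missing commentary": 1},
}

# Problems by subject area, which exclude the easily corrected types.
BY_AREA = {
    2017: {"obstetrics and gynecology": 10, "public health": 8, "pediatrics": 3,
           "surgery": 3, "internal medicine": 0, "basic sciences": 0},
    2018: {"surgery": 4, "public health": 2, "internal medicine": 1,
           "pediatrics": 1, "basic sciences": 0, "obstetrics and gynecology": 0},
}

# Types the local committee could fix itself, so left out of the area breakdown.
EASILY_CORRECTED = {"five answer choices", "correct answer not in option A"}


def substantive_total(year: int) -> int:
    """Number of problems remaining after dropping easily corrected types."""
    return sum(n for kind, n in PROBLEMS[year].items() if kind not in EASILY_CORRECTED)


def check_breakdown(year: int) -> bool:
    """True if the area breakdown accounts for every substantive problem."""
    return substantive_total(year) == sum(BY_AREA[year].values())


def area_change() -> dict[str, int]:
    """Change in substantive problems per area, 2018 minus 2017."""
    return {area: BY_AREA[2018][area] - BY_AREA[2017][area] for area in BY_AREA[2017]}


if __name__ == "__main__":
    for year in (2017, 2018):
        print(year, substantive_total(year), check_breakdown(year))
    print(area_change())
```

Both years reconcile, and the per-area differences show where the decline was concentrated.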

Comparing flawed and accepted items showed an inverse relationship between flaws and acceptance: the more flaws detected, the fewer items accepted for the final exam (see Figure 2).

DISCUSSION

Assessing students’ knowledge is a challenge in medical education. Several domains of professionalism need to be assessed accurately, which requires multimethod, longitudinal assessment. Multiple-choice questions are useful for assessing knowledge but are difficult to write, especially in content areas such as mental health, ethics, and the humanities. Despite this difficulty, multiple-choice questions can provide highly reliable data, and they are routinely used throughout Brazil.25

Improving faculty members’ item-writing skills is therefore critical for building tests that discriminate precisely between high- and low-achieving students. Many studies have evaluated the effect of faculty development programs on item writing, but most have assessed outcomes only at the lower levels of the Kirkpatrick model,15 and there is evidence that item-writing flaws can harm the outcome of high-stakes examinations.26-28

In this report, we present an institutional experience with a school-based feedback system that improved the quality of item writing for the progress test. A broad evaluation that highlighted flawed items made many faculty members and departments uncomfortable, and a degree of embarrassment may have motivated them to improve the quality of the requested items the following year. This seems to have occurred especially in obstetrics and gynecology, where flaws fell from 10 to none, and in public health, where they fell from 8 to 2. A limitation of this report is that we did not directly assess each item writer’s reactions and behavior changes; such an individualized assessment would have strengthened our observations.

In 2002, Downing recommended giving feedback to item authors;29 however, we found no reports of this recommendation being implemented, which suggests that our experience is novel. Along similar lines, Kim et al. (2010) suggested that organic principles reflecting item writers’ trial-and-error process would benefit them more than “Dos and Don’ts” principles.30

Our data also offer good news about the school’s adoption of item-writing guidelines: negative propositions and “all of the above” answers, both identified as errors to be avoided, were infrequent among the problems we detected.27,31

Another limitation of our study is possible sampling bias, because we included only faculty members involved in writing progress test items. Other faculty members may well use flawed items in regular classroom examinations. Nevertheless, a wave of change begins with conscientious and motivated faculty members.

Finally, giving feedback to progress test item writers adds another arm to the feedback that progress testing can provide, increasing its usefulness for medical schools.

Following this first observation, we hope that other investigators will recognize the opportunities offered by frequent requests for item writing, provide faculty members with systematic feedback on flaws and on students’ performance on each written item, and rigorously assess the impact of these practices on the quality of high-stakes examinations.

CONCLUSIONS

Our work showed a marked decrease in flawed items, from 39 of 126 delivered items (31%) in 2017 to 10 of 117 (9%) in 2018, after flaws and the fate of items were disclosed. It also showed an increase in the number of items eligible for use in the progress test, from 87 to 107. Giving feedback to faculty item writers seems to be a good strategy for developing their item-writing proficiency.